Synit and SqueakPhone demo video

I’ve just finished a demo of the SqueakPhone and Synit running on real phones. You can see it on youtube, or watch it embedded directly below from an mp4 file hosted on this webserver:

Journal entries

An Atom feed of these posts is also available.

I’ve just published an essay called “Breaking Down the System Layer” as part of the Synit manual. The essay surveys a few realizations of the idea of a “system layer” in free (and, to a limited extent, proprietary) operating systems, analysing their components and classifying and categorising their functionality.

One of my projects over the past couple of years has been Synit, a Syndicate init (really, systemd and D-Bus) replacement. Synit underpins another project, #squeak-phone, a sketch of an alternative to Android for personal computing on a mobile device.

These are one-person projects at present, and the current round of development is coming to a close. This is a summary of where things stand.

Goals. Aim for a hackable, tweakable, pocket-sized Smalltalk system that’s also a phone. Also, see what happens if we replace systemd, D-Bus etc. with Syndicate-style alternatives.

Outcomes. It runs! Smalltalk on a phone. Weird but fun alternative universe, not Android, not systemd. You can make calls, send/receive SMS, use cellular data, WIFI, look at maps, etc. No browser yet.

Synit on PostmarketOS boot splash screen.

Synit is my experimental alternative “system layer” for Linux. I chose to use my #squeak-phone project as the initial vehicle for exploring the idea.

Squeak-phone uses the Syndicated Actor Model in conjunction with Squeak Smalltalk and a few other bits of software to have a foundation for exploring an alternative universe of mobile personal computing.

I’ve written a few posts about it (both on this site and on eighty-twenty.org). This page outlines the portion of the project that was funded by NLNet’s NGI Zero. (Thanks, NLNet!) The Synit project has a homepage at synit.org now, with links to documentation and code. Common Syndicate parts of the project of course remain here at syndicate-lang.org and in the Syndicate git repositories.

The prototype Synit system is based on postmarketOS, which is an Alpine Linux distribution for cellphones. Synit adds a few custom Alpine packages, one of which does unspeakable things to the normal postmarketOS packages to outright replace /sbin/init with a Syndicate replacement.

The first thing the Syndicated PID 1 does is start a syndicate-server instance as the root system bus, which then uses Erlang-style supervision patterns to spawn the rest of the system.
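To give a flavour of what "Erlang-style supervision" means, here is a minimal sketch of one restart policy: each child is restarted independently when it crashes, up to a fixed limit. This is a generic illustration in plain TypeScript, not how syndicate-server is configured or implemented; `ChildSpec`, `superviseOneForOne` and `maxRestarts` are hypothetical names, and children are run one after another only to keep the example short.

```typescript
// Hypothetical child description: `start` runs the child to completion
// and throws if the child "crashes".
interface ChildSpec {
  name: string;
  start: () => void;
}

// Restart each child on failure, independently of its siblings,
// giving up on a child after `maxRestarts` restarts.
// Returns a log of what happened instead of printing it.
function superviseOneForOne(children: ChildSpec[], maxRestarts: number): string[] {
  const log: string[] = [];
  for (const child of children) {
    let restarts = 0;
    for (;;) {
      try {
        child.start();
        log.push(`${child.name}: exited normally`);
        break;
      } catch {
        if (restarts >= maxRestarts) {
          log.push(`${child.name}: giving up`);
          break;
        }
        restarts += 1;
        log.push(`${child.name}: crashed, restart ${restarts}`);
      }
    }
  }
  return log;
}
```

A real supervisor runs its children concurrently and usually measures restart intensity over a time window; the sketch only shows the restart-and-give-up decision.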

After booting, the most-recently-saved Smalltalk image comes back to life.

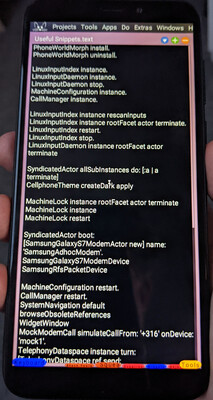

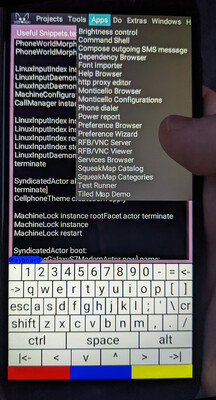

One of the actors started by the system bus is a Squeak Smalltalk image. The photo here shows the Smalltalk image just after the phone completes booting: it has a Smalltalk workspace visible, containing useful snippets of Smalltalk code for interacting with the phone that I can execute with the tap of a (couple of) finger(s).

It’s a regular Smalltalk image, so I can make changes to the code, save snapshots, and so on. When I reboot the phone, the most recent image snapshot is loaded.

Both PID 1 and syndicate-server are written in Rust, but system actors can be written in any language that speaks the Syndicate protocol; this currently means TypeScript/JavaScript, Bash (!), Smalltalk, Python, Nim, Rust, and Racket.

The system is reactive. It self-organises around the information placed in the system dataspace. Schemas are used to strongly (dynamically) type the system. Object-capabilities are used to secure the system. Various system actors react to changes in the machine’s environment, such as (dis)appearance of WIFI SSIDs, hotplugging of devices, and so on.

For example, placing a record <configure-interface "lo" <static "127.0.0.1/8">> into the dataspace triggers an actor that uses the ip command-line tool to set up the interface. Removing the record causes the same actor to use ip again to tear the interface down. Likewise, addition of <configure-interface "eth0" <dhcp>> causes startup of a DHCP client daemon; subsequent removal terminates the daemon and removes the interface.
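The shape of that reaction can be sketched in plain TypeScript. This is a toy in-memory stand-in, not the Syndicate API: `ToyDataspace`, `InterfaceObserver`, `assertRecord` and `retractRecord` are hypothetical names, and the command strings are guesses at what such an actor might run, collected in an array rather than executed.

```typescript
// Toy model of the <configure-interface ...> records described above.
type Addressing = { kind: "static"; cidr: string } | { kind: "dhcp" };

interface ConfigureInterface {
  name: string;
  addressing: Addressing;
}

interface InterfaceObserver {
  onAdded(record: ConfigureInterface): void;
  onRemoved(record: ConfigureInterface): void;
}

// Hypothetical stand-in for a dataspace: it stores records and tells
// observers when a record appears or disappears.
class ToyDataspace {
  private records: ConfigureInterface[] = [];
  private observers: InterfaceObserver[] = [];

  observe(observer: InterfaceObserver): void {
    this.observers.push(observer);
    // Late observers still see the records that are already present.
    for (const record of this.records) observer.onAdded(record);
  }

  assertRecord(record: ConfigureInterface): void {
    if (this.records.some((r) => r.name === record.name)) return;
    this.records.push(record);
    for (const o of this.observers) o.onAdded(record);
  }

  retractRecord(name: string): void {
    const removed = this.records.filter((r) => r.name === name);
    this.records = this.records.filter((r) => r.name !== name);
    for (const record of removed) {
      for (const o of this.observers) o.onRemoved(record);
    }
  }
}

// Commands the actor would run (assumed, for illustration only).
const commands: string[] = [];

const interfaceActor: InterfaceObserver = {
  onAdded(record) {
    const a = record.addressing;
    if (a.kind === "static") {
      commands.push(`ip address add ${a.cidr} dev ${record.name}`);
      commands.push(`ip link set ${record.name} up`);
    } else {
      commands.push(`start DHCP client on ${record.name}`);
    }
  },
  onRemoved(record) {
    if (record.addressing.kind === "dhcp") {
      commands.push(`stop DHCP client on ${record.name}`);
    }
    commands.push(`ip link set ${record.name} down`);
  },
};

const dataspace = new ToyDataspace();
dataspace.observe(interfaceActor);
dataspace.assertRecord({ name: "lo", addressing: { kind: "static", cidr: "127.0.0.1/8" } });
dataspace.assertRecord({ name: "eth0", addressing: { kind: "dhcp" } });
dataspace.retractRecord("eth0");
dataspace.retractRecord("lo");
```

The point of the pattern is that the actor never receives "set up" or "tear down" commands: it only observes whether the record is present and brings the machine into line with that.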

One of the schemas, ui.prs, describes a Syndicate protocol for user interfaces. It’s a very rough sketch at the moment, and woefully underdocumented - but here are a few “applications” written using it:

The dialer screen.

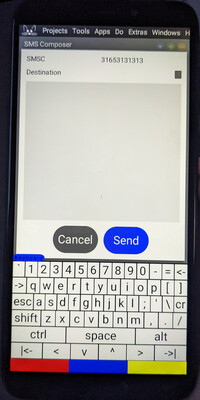

The dialer screen. The SMS composition window.

The SMS composition window. The brightness control.

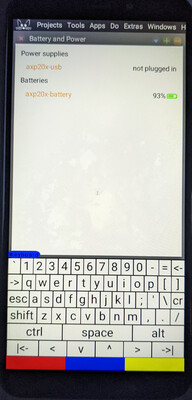

The brightness control. The battery and power display.

The battery and power display.Each application is a Syndicate actor running either inside the Smalltalk image or as a neighbouring process on the system, interacting with a Smalltalk UI daemon that speaks the protocol defined in ui.prs.

The UI-presenting actors are separate from the actors that manage the underlying information, such as the brightness status and control, or the details of the system’s current power status.

The on-screen keyboard is a simple Smalltalk program. The red, blue, and yellow rectangles beneath the keyboard are shift-like keys used to change the interpretation of a finger tap: they correspond to left, middle, and right-clicks, respectively.

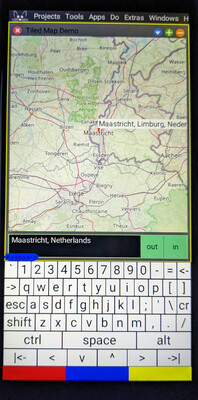

A tiled-map (“slippy map”) widget.

The placeholder “apps” menu, including both SqueakPhone and ordinary Squeak “applications”.

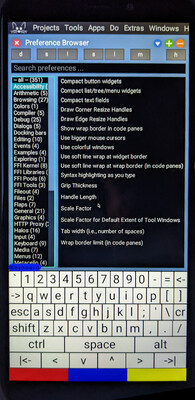

The ordinary Squeak preference browser.

A little Smalltalk program wraps a TiledMapMorph I wrote a few years ago to provide a “map” application, backed by OpenStreetMap by default.

The system enables cellular data by default, so the maps can be used when you’re out and about. You can configure WIFI SSID/password information, too, but you have to do it using a text editor at the moment. There’s only one of me!

Finally, it’s still very much a regular Smalltalk system, with only the lightest of customisation for handheld use. All the normal Squeak “applications” are available, such as version control, code editing, font management, preference settings etc.

I think the experiment so far has been a success.

The big reusable pieces, likely to find application in other projects besides the continuation of this one, are Preserves, Preserves Schema, the Syndicated Actor Model itself, and the syndicate-server program.

The idea of synit (using Syndicate as a system layer, replacing D-Bus, systemd and so on) has worked out really well, and I want to try it out for desktop and server systems, too.

Smalltalk-on-a-phone is also really promising, and it’s super fun to be able to hack about with the phone without needing a separate desktop/laptop system to develop on. However, careful design of both the user interfaces and the underlying system protocols will be crucial to actually make a usable and securable system. The system-layer stuff is simple in comparison.

Lots remains to be done. I’m looking forward to it!

Last post for today! Now that the protocol specification, the Synit website, the Synit manual, and the Synit codebase are all published and live, the final piece of the puzzle is the SqueakPhone codebase.

It comes in two parts:

Follow the instructions in the README to give it a try.

It’s quite likely the instructions are not detailed enough, or that I’ve accidentally left the code in a “works on my machine but only on my machine” state, so if you try it out and it doesn’t work, or you have questions, please stop by on IRC or just email me directly.

I’m really pleased to announce the first public release of Synit. From the site:

Synit is an experiment in applying pervasive reactivity and object capabilities to the System Layer of an operating system for personal computers, including laptops, desktops, and mobile phones. Its architecture follows the principles of the Syndicated Actor Model.

Synit is the foundation of the squeak-on-a-cellphone system-layer experiment I’ve been working on for the past year or so.

To go along with the software, there’s a draft manual, including

an essay giving a good overview of the Syndicated Actor Model;

a quick description of the architecture of the system;

the protocol specification that I just announced;

and more.

Come hang out on IRC! Or, just email me directly.

I’ve just published the first public draft of the Syndicate Network Protocol specification.

It has already been implemented for Rust, TypeScript, Python 3, Racket and Squeak: see the implementations page.

Feedback, comments, and criticism very welcome! Just email me.

Patrick Dubroy recently posted an interesting challenge problem:

The goal is to implement a square that you can either drag and drop, or click. The code should distinguish between the two gestures: a click shouldn’t just be treated as a drop with no drag. Finally, when you’re dragging, pressing escape should abort the drag and reset the object back to its original position.

In his post, he gives three solutions. A few days later, Francisco Sant’Anna posted an additional take on the problem using Céu. Later still, Francisco wrote another post comparing and contrasting various approaches to concurrency including Céu and Martin Sústrik’s idea of Structured Concurrency.

Since Syndicate is similar both to Structured Concurrency and to synchronous languages like Céu, Francisco’s post was enough for me to get around to trying to solve Patrick’s challenge problem using Syndicate.

I’ve put a complete project up here; you can clone it with

    git clone https://git.syndicate-lang.org/tonyg/dubroy-user-input

You can try the program out by clicking here.

The interesting bit is the part that implements the main state machine, function awaitClick, so in the rest of this post I’ll look into that in some detail. To see how the whole program hangs together, visit the index.ts file in the git repository.

Each state is represented as a Syndicate facet. We kick things off with

    react {
      awaitClick('Waiting');
    }

which enters a fresh facet and sets up its behaviour using awaitClick:

     1  function awaitClick(status: string) {
     2    assert Status(status);
     3    on message Mouse('down', $dX: number, $dY: number) => {
     4      if (inBounds(dX - x.value, dY - y.value)) {
     5        stop {
     6          react {
     7            assert Status('Intermediate');
     8            const [oX, oY] = [x.value, y.value];
     9            stop on message Mouse('up', _, _) => react {
    10              awaitClick('Clicked!');
    11            }
    12            stop on message Key('Escape') => react {
    13              awaitClick('Cancelled!');
    14            }
    15            stop on message Mouse('move', $nX: number, $nY: number) => react {
    16              assert Status('Dragging');
    17              stop on message Mouse('up', _, _) => react {
    18                awaitClick('Dragged!');
    19              }
    20              stop on message Key('Escape') => react {
    21                [x.value, y.value] = [oX, oY];
    22                awaitClick('Cancelled!');
    23              }
    24              [x.value, y.value] = [oX + nX - dX, oY + nY - dY];
    25              on message Mouse('move', $nX: number, $nY: number) => {
    26                [x.value, y.value] = [oX + nX - dX, oY + nY - dY];
    27              }
    28            }
    29          }
    30        }
    31      }
    32    }
    33  }

When the mouse button is pressed (line 3), if the press is inside the square (line 4), we transition (lines 5–6) to a new state (lines 7–28).

This “intermediate” state is responsible for disambiguating clicks and drags. If we get a mouse up event, we transition back to waiting for a click (lines 9–11). If we get an Escape keypress, we do the same (lines 12–14). If we get some mouse motion, however, we transition (line 15) to a third state (lines 16–27).

This “drag” state tracks the mouse as it moves (lines 24–27). If the mouse button is released, we go back to waiting for a click (lines 17–19). If Escape is pressed (lines 20–23), we transition back to awaitClick state after resetting the square’s position (line 21).

Syndicate dataflow variables hook up changes to x.value and y.value to the position of the square (see here in the full program), and the Status(...) assertions are reflected onto the screen by a little auxiliary actor (here in the full program).

The idiom

    stop on <Event> => react { ... }

seen on lines 9, 12, 15, 17, and 20, acts as a state transition: the stop keyword causes the active facet to terminate when the event is received, and the react statement after => enters a new state. [1]

And that’s it! Hopefully I’ve managed to convey the idea.

I considered a few variations on this implementation as I was writing it. Originally, I omitted lines 12–14 above, because I read the specification as requiring a response to Escape only within a drag, not the intermediate state before it’s known whether a press corresponds to a click or a drag. Another variation was less state-machine-like and more statechart-like, with Escape handling in an outer state and mouse handling in a pair of inner states, but it wasn’t quite as clear as the variation above.
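For readers who don't know Syndicate's facets, the same three states can be written as an explicit state machine in plain TypeScript. This is a comparison sketch, not part of the project: `Model`, `Phase`, `Input`, `step` and the square size `SIZE` are hypothetical names, and `inBounds` here is a stand-in that, like the original, takes coordinates relative to the square.

```typescript
interface Point { x: number; y: number }

// One variant per state; the two later states remember where the press
// happened (`down`) and where the square started (`origin`).
type Phase =
  | { tag: "waiting" }
  | { tag: "intermediate"; down: Point; origin: Point }
  | { tag: "dragging"; down: Point; origin: Point };

interface Model { pos: Point; status: string; phase: Phase }

type Input =
  | { type: "down" | "up" | "move"; x: number; y: number }
  | { type: "escape" };

const SIZE = 50; // assumed side length of the square

function inBounds(dx: number, dy: number): boolean {
  return dx >= 0 && dx < SIZE && dy >= 0 && dy < SIZE;
}

function waiting(pos: Point, status: string): Model {
  return { pos, status, phase: { tag: "waiting" } };
}

function drag(down: Point, origin: Point, at: Point): Model {
  const pos = { x: origin.x + at.x - down.x, y: origin.y + at.y - down.y };
  return { pos, status: "Dragging", phase: { tag: "dragging", down, origin } };
}

// Pure transition function: current model plus one input gives the next model.
function step(m: Model, ev: Input): Model {
  const p = m.phase;
  if (p.tag === "waiting") {
    if (ev.type === "down" && inBounds(ev.x - m.pos.x, ev.y - m.pos.y)) {
      const down = { x: ev.x, y: ev.y };
      return { pos: m.pos, status: "Intermediate", phase: { tag: "intermediate", down, origin: m.pos } };
    }
    return m;
  }
  if (p.tag === "intermediate") {
    if (ev.type === "up") return waiting(m.pos, "Clicked!");
    if (ev.type === "escape") return waiting(m.pos, "Cancelled!");
    if (ev.type === "move") return drag(p.down, p.origin, ev);
    return m;
  }
  // Remaining case: dragging.
  if (ev.type === "up") return waiting(m.pos, "Dragged!");
  if (ev.type === "escape") return waiting(p.origin, "Cancelled!");
  if (ev.type === "move") return drag(p.down, p.origin, ev);
  return m;
}
```

In this version every handler has to check which state it is in. In the facet version that bookkeeping disappears, because a handler exists only while its facet is alive.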

[1] An equivalent way to write this idiom is on <Event> => stop { react { ... } }. I’m considering introducing new syntax, transition { ... } for the stop { react { ... } } part, so you could write on <Event> => transition { ... }.

I’ve just released SyndicatedActors, a package for Squeak Smalltalk that implements the Syndicated Actor Model.

You can get it from the project page on SqueakSource:

The code depends on the Preserves and BTree-Collections support packages. Preserves is available from its SqueakSource project page, and BTree-Collections is included in the SyndicatedActors project.

To do all these installation steps from within a running Squeak image:

    Installer ss project: 'Preserves';
        install: 'Preserves'.
    Installer ss project: 'SyndicatedActors';
        install: 'BTree-Collections';
        install: 'SyndicatedActors'.

Happy New Year!

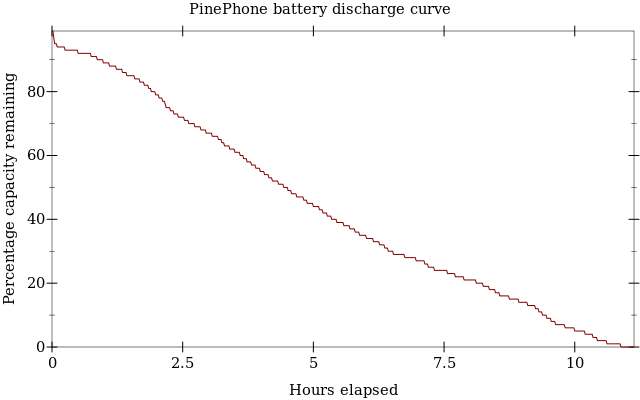

I’ve been using the PinePhone 1.2 to develop synit recently. I haven’t done anything about power management or sleeping yet, and I wondered how bad the battery life might be, so last night I ran the following script from a full battery until the phone shut down from lack of power:

    while true
    do
        date
        cat /sys/class/power_supply/axp20x-battery/capacity
        sleep 60
        sync
    done | tee -a battery-rundown-log

Here is the resulting discharge curve (data):

Before running the script, I turned off the display and backlight,

    # Puts the framebuffer in "graphics" mode, disabling touchscreen
    # sensitivity and "screen saver" mode
    echo 1 | sudo tee /sys/class/graphics/fb0/state

    # Actually blanks the screen, shutting down the graphics pipeline
    echo 3 | sudo tee /sys/class/graphics/fb0/blank

and almost nothing was running on the phone: normal system daemons (udevd, syslogd, dbus-daemon, haveged, chronyd), wifi support (wpa_supplicant and NetworkManager), modem support (eg25-manager and gpsd), sshd, and a lone getty.

This, then, is about as good as we might reasonably expect from an idle system, with its display blanked, that never goes into sleep mode: around ten or eleven hours of battery life.
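A figure like that can be read straight off the log. The sketch below assumes the log alternates a `date` line with a bare integer percentage, as the script above would produce, and estimates the elapsed time from the number of samples, one per `sleep 60`. `summariseRundown` and `RundownSummary` are hypothetical names, and the estimate ignores the time the other commands in the loop take.

```typescript
const SAMPLE_INTERVAL_SECONDS = 60; // matches `sleep 60` in the script

interface RundownSummary {
  samples: number;
  firstPercent: number;
  lastPercent: number;
  approxHours: number;
}

// Pick out the capacity readings (lines consisting only of digits) and
// estimate the run time from how many there are.
function summariseRundown(log: string): RundownSummary | null {
  const readings: number[] = [];
  for (const line of log.split("\n")) {
    const trimmed = line.trim();
    if (/^\d+$/.test(trimmed)) readings.push(parseInt(trimmed, 10));
  }
  if (readings.length === 0) return null;
  const elapsedSeconds = (readings.length - 1) * SAMPLE_INTERVAL_SECONDS;
  return {
    samples: readings.length,
    firstPercent: readings[0],
    lastPercent: readings[readings.length - 1],
    approxHours: elapsedSeconds / 3600,
  };
}
```

Under these assumptions, a log with 601 readings corresponds to ten hours of run time.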

Putting the phone into sleep mode should significantly extend the battery life.

I plan on running Squeak Smalltalk for the user interface, and I suspect that there’ll be a fair amount of power draw as a result of that, so it seems likely I’ll have to investigate putting the phone into sleep mode.

Lots and lots has happened since the last update!

The big-ticket items each get a blog post of their own: